13. Execution Environments

The ability to build and deploy Python virtual environments for automation has been replaced by Ansible execution environments. Unlike legacy virtual environments, execution environments are container images that make it possible to incorporate system-level dependencies and collection-based content. Each execution environment gives you a customized image in which to run jobs, and each contains only what the job needs, nothing more.

13.1. Building an Execution Environment

The Getting started with Execution Environments guide will give you a brief technology overview and show you how to build and test your first execution environment in a few easy steps.

13.2. Use an execution environment in jobs

In order to use an execution environment in a job, a few components are required:

Use the AWX user interface to specify the execution environment you built for use in your job templates.

Depending on whether an execution environment is made available for global use or tied to an organization, you must have the appropriate level of administrator privileges in order to use an execution environment in a job. Execution environments tied to an organization require Organization administrators to be able to run jobs with those execution environments.

Before running a job or job template that uses an execution environment that has a credential assigned to it, be sure that the credential contains a username, host, and password.
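The credential check described above can be sketched as a small pre-flight helper. This is only an illustration: the function name `missing_credential_fields` and the dictionary layout are hypothetical, and the field names are taken from the sentence above (username, host, and password) rather than from any AWX API.

```python
from __future__ import annotations

# Fields the text above says a registry credential must contain.
REQUIRED_FIELDS = ("host", "username", "password")


def missing_credential_fields(inputs: dict) -> list[str]:
    """Return the required fields that are absent or empty in `inputs`."""
    return [
        name
        for name in REQUIRED_FIELDS
        if not str(inputs.get(name) or "").strip()
    ]


if __name__ == "__main__":
    # Hypothetical credential values, for illustration only.
    example = {"host": "quay.io", "username": "awx-bot", "password": ""}
    problems = missing_credential_fields(example)
    if problems:
        print("Credential is missing:", ", ".join(problems))
```

An empty list means all three fields are present and non-empty.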

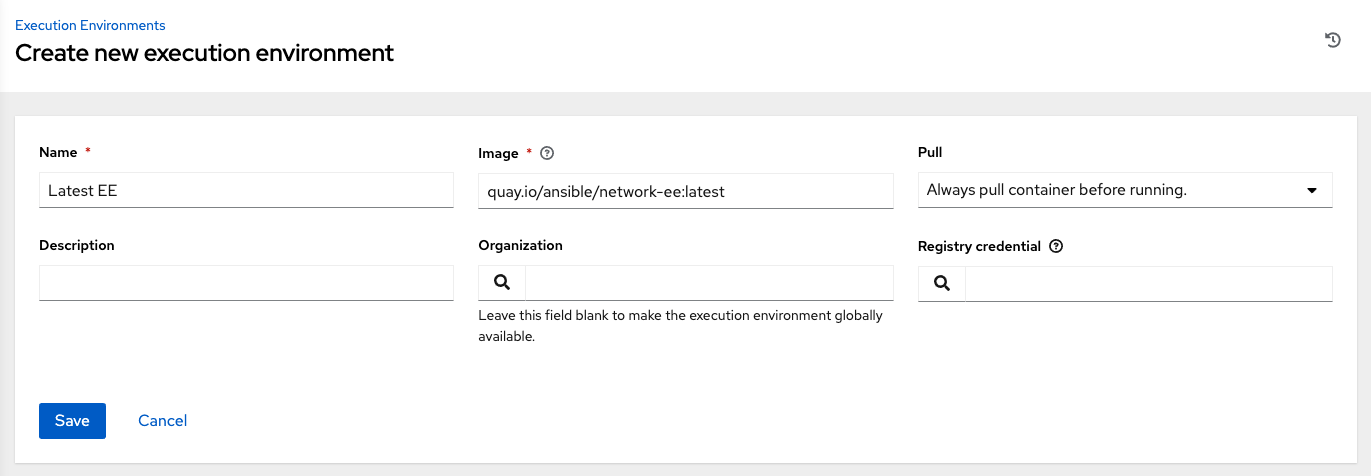

Click Execution Environments from the left navigation bar of the AWX user interface.

Add an execution environment by selecting the Add button.

Enter the appropriate details into the following fields:

Name: Enter a name for the execution environment (required).

Image: Enter the image name (required). The image name requires its full location (repo), the registry, image name, and version tag, for example quay.io/ansible/awx-ee:latest, following the pattern repo/project/image-name:tag.

Pull: optionally choose the type of pull when running jobs:

Always pull container before running: Pulls the latest image file for the container.

Only pull the image if not present before running: Pulls the image only if it is not already present.

Never pull container before running: Never pulls the latest version of the container image.

Description: optional.

Organization: optionally assign the organization to specifically use this execution environment. To make the execution environment available for use across multiple organizations, leave this field blank.

Registry credential: If the image has a protected container registry, provide the credential to access it.

Click Save.

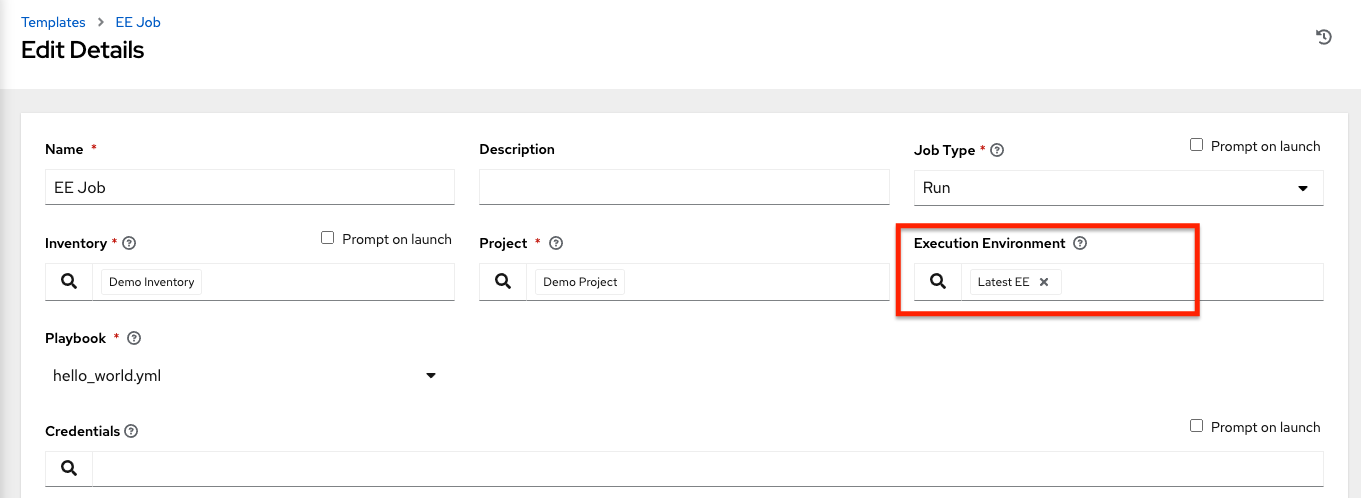
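The same fields can also be supplied programmatically. The following is a minimal sketch, assuming the AWX REST API exposes an `/api/v2/execution_environments/` endpoint whose `pull` field accepts `always`, `missing`, or `never` (or an empty value), and that a bearer token is used for authentication. Verify the endpoint, field names, and choices against the API browser of your own installation; the helper names `build_ee_payload` and `create_execution_environment` are hypothetical.

```python
import json
import urllib.request

# Assumed choices for the "pull" field; an empty string leaves it unset.
PULL_CHOICES = {"", "always", "missing", "never"}


def build_ee_payload(name, image, pull="", description="",
                     organization=None, credential=None):
    """Build a request body mirroring the form fields described above."""
    if not name or not image:
        raise ValueError("name and image are required")
    if pull not in PULL_CHOICES:
        raise ValueError(f"pull must be one of {sorted(PULL_CHOICES)}")
    return {
        "name": name,
        "image": image,
        "pull": pull,
        "description": description,
        # None leaves the execution environment available to all organizations.
        "organization": organization,
        # ID of a registry credential, needed only for protected registries.
        "credential": credential,
    }


def create_execution_environment(base_url, token, payload):
    """POST the payload to the (assumed) execution environments endpoint."""
    request = urllib.request.Request(
        f"{base_url.rstrip('/')}/api/v2/execution_environments/",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


if __name__ == "__main__":
    payload = build_ee_payload(
        name="My execution environment",
        image="quay.io/ansible/awx-ee:latest",
        pull="missing",
    )
    print(json.dumps(payload, indent=2))
```

Only the payload is printed here; calling `create_execution_environment` requires a reachable AWX instance and a valid token.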

Now your newly added execution environment is ready to be used in a job template. To add an execution environment to a job template, specify it in the Execution Environment field of the job template, as shown in the example below. For more information on setting up a job template, see Job Templates in the AWX User Guide.

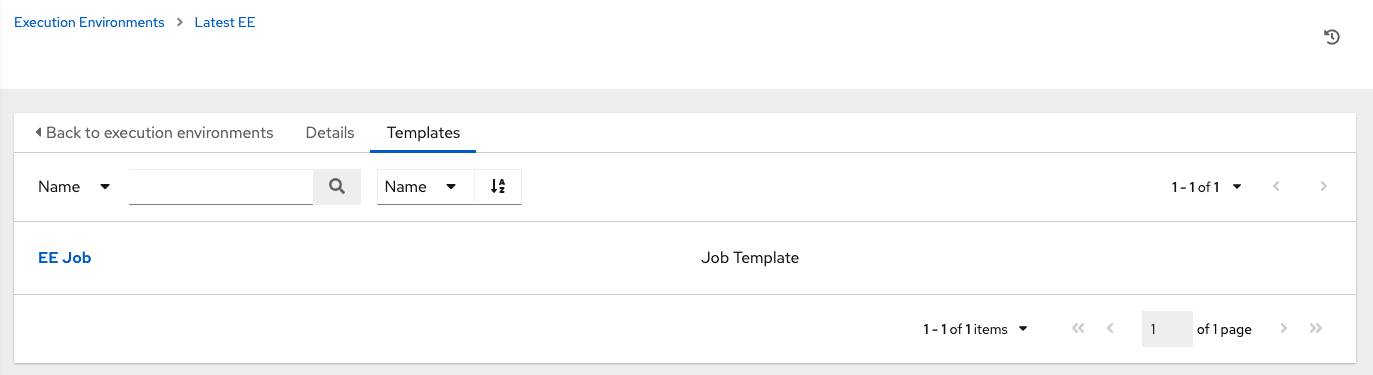

Once you have added an execution environment to a job template, you can see those templates listed in the Templates tab of the execution environment.

13.3. Execution environment mount options

Rebuilding an execution environment is one way to add certs, but inheriting certs from the host provides a more convenient solution.

Additionally, you may customize execution environment mount options and mount paths in the Paths to expose to isolated jobs field of the Job Settings page, which supports podman-style volume mount syntax. Refer to the Podman documentation for details.

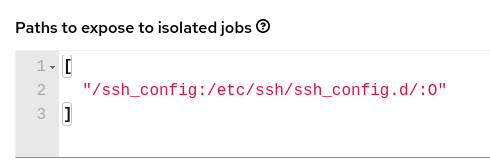

In some cases where the /etc/ssh/* files were added to the execution environment image due to customization of an execution environment, an SSH error may occur. For example, exposing the /etc/ssh/ssh_config.d:/etc/ssh/ssh_config.d:O path allows the container to be mounted, but the ownership permissions are not mapped correctly.

If you encounter this error, or have upgraded from an older version of AWX, perform the following steps:

Change the container ownership on the mounted volume to root.

In the Paths to expose to isolated jobs field of the Job Settings page, using the current example, expose the path as such:

Note

The :O option is only supported for directories. It is highly recommended that you be as specific as possible, especially when specifying system paths. Mounting /etc or /usr directly has an impact that makes troubleshooting difficult.

This instructs Podman to run a command similar to the example below, where the configuration is mounted and the ssh command works as expected.

podman run -v /ssh_config:/etc/ssh/ssh_config.d/:O ...

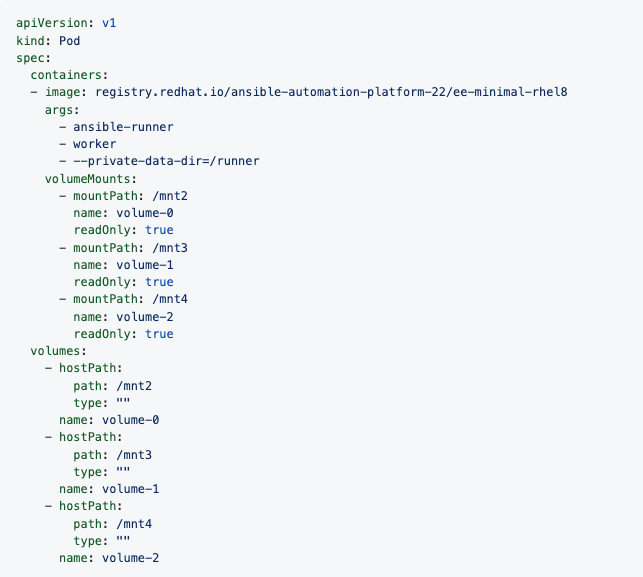

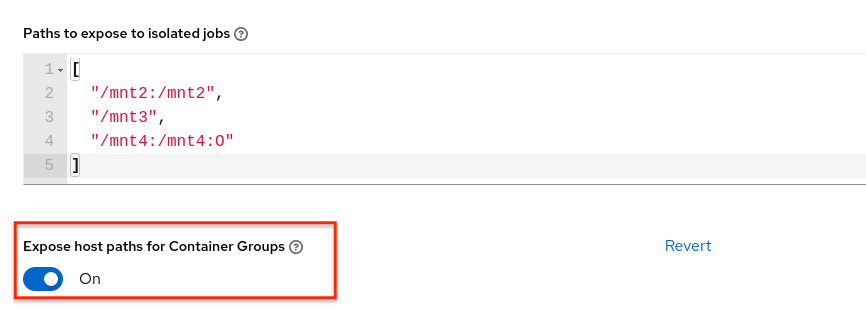
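To make the podman-style mount syntax concrete, here is a minimal sketch that splits an exposed path into source, destination, and options, flags the broad system paths the note warns about, and rebuilds the matching `-v` argument. It assumes an entry takes one of the forms `PATH`, `SOURCE:DEST`, or `SOURCE:DEST:OPTIONS`. The helper names (`parse_mount`, `is_too_broad`, `to_podman_args`) are hypothetical and do not come from AWX or Podman.

```python
from typing import NamedTuple


class Mount(NamedTuple):
    source: str
    destination: str
    options: str


# System directories the note above advises against mounting directly.
BROAD_PATHS = {"/etc", "/usr"}


def parse_mount(spec: str) -> Mount:
    """Split a podman-style volume spec into its parts."""
    parts = spec.split(":")
    if len(parts) == 1:
        source = destination = parts[0]
        options = ""
    elif len(parts) == 2:
        source, destination = parts
        options = ""
    elif len(parts) == 3:
        source, destination, options = parts
    else:
        raise ValueError(f"too many ':' separators in {spec!r}")
    if not source.startswith("/") or not destination.startswith("/"):
        raise ValueError(f"paths must be absolute in {spec!r}")
    return Mount(source, destination, options)


def is_too_broad(mount: Mount) -> bool:
    """True if either side of the mount is /etc or /usr itself."""
    return any(
        (path.rstrip("/") or "/") in BROAD_PATHS
        for path in (mount.source, mount.destination)
    )


def to_podman_args(mount: Mount) -> list:
    """Rebuild the -v argument that corresponds to the mount."""
    return ["-v", ":".join(part for part in mount if part)]


if __name__ == "__main__":
    mount = parse_mount("/ssh_config:/etc/ssh/ssh_config.d/:O")
    print(mount)
    print(" ".join(["podman", "run"] + to_podman_args(mount) + ["..."]))
    print("too broad:", is_too_broad(parse_mount("/etc:/etc:O")))
```

The check is deliberately narrow: it only rejects `/etc` and `/usr` themselves, so a specific subdirectory such as `/etc/ssh/ssh_config.d/` still passes.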

To expose isolated paths in OpenShift or Kubernetes containers as HostPath, assume the following configuration:

Use the Expose host paths for Container Groups toggle to enable it.

Once the playbook runs, the resulting Pod spec will look similar to the example below. Note the details of the volumeMounts and volumes sections.
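As a rough illustration of how exposed paths relate to the volumeMounts and volumes sections, the sketch below builds a Pod spec fragment from a list of paths using the standard Kubernetes `hostPath` volume type. It is not actual AWX output: the paths `/mnt2` and `/mnt3` and the volume naming scheme `exposed-path-N` are hypothetical, and the names AWX generates may differ.

```python
import json


def host_path_fragment(paths):
    """Build volumeMounts/volumes entries for a list of exposed paths.

    Each entry is either "PATH" or "SOURCE:DEST[:OPTIONS]"; options are
    ignored in this sketch.
    """
    volumes = []
    volume_mounts = []
    for index, spec in enumerate(paths):
        parts = spec.split(":")
        source = parts[0]
        destination = parts[1] if len(parts) > 1 else source
        name = f"exposed-path-{index}"  # hypothetical naming scheme
        volumes.append({"name": name, "hostPath": {"path": source}})
        volume_mounts.append({"name": name, "mountPath": destination})
    return {"volumeMounts": volume_mounts, "volumes": volumes}


if __name__ == "__main__":
    fragment = host_path_fragment(["/mnt2:/mnt2", "/mnt3"])
    print(json.dumps(fragment, indent=2))
```

In a real Pod spec, the `volumeMounts` list sits under a container entry while `volumes` sits at the Pod level; each mount refers to its volume by `name`.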